The Reliability Gap in Enterprise AI Systems

Bridge the gap between AI output and verifiable accuracy. A multi-layered framework for risk management, HITL oversight, and auditability in regulated industries.

Published on Feb 6, 2026

In regulated sectors, the stakes for accuracy are absolute. A single non-compliant recommendation from an AI in finance can trigger intense regulatory scrutiny and significant fines. This reality exposes a fundamental gap between a large language model's plausible-sounding output and the verifiable accuracy that enterprises require. The core issue is not the size of a model's memory. It is that a model on its own cannot check its claims against external sources or grasp situational context.

The problem is often misdiagnosed as one of context window limits. Think of a brilliant student who has read thousands of books but has zero real-world experience and no access to a live, verified database. The knowledge is vast but untethered from present reality. This disconnect creates specific and costly failure modes for businesses that depend on precision.

We see these failures manifest in several ways:

- Subtle Data Misinterpretation: An AI summarizing a quarterly earnings report might generate a confident overview but completely miss the critical nuance buried in a footnote. This small oversight can lead to a deeply flawed financial projection that executives then act upon.

- Regulatory Guideline Violations: An AI tasked with generating marketing copy for a new investment product could inadvertently create a forward-looking promise that violates FINRA rules. In healthcare, it might suggest a patient communication that breaches HIPAA privacy standards, creating immediate legal exposure.

- Costly Hallucinations: Perhaps the most dangerous failure is when a model invents a legal precedent to support a contract analysis or cites a non-existent medical study to validate a treatment summary. These confident falsehoods create massive legal and reputational risks that are difficult to unwind.

The objective, therefore, is not to build a bigger context window. It is to engineer a system whose outputs can be verified. AI reliability in regulated industries comes from a system designed for verification, not just generation. This requires moving beyond the model itself and building a comprehensive framework around it.

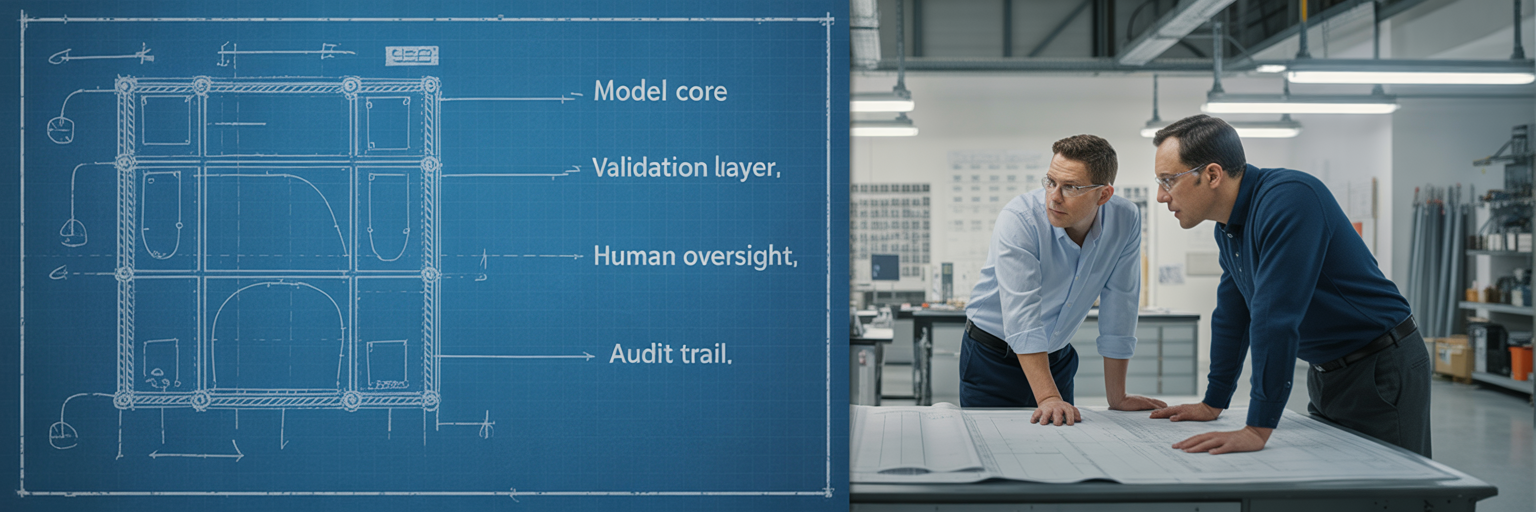

A Multi-Layered Risk Management Framework

The path to reliable AI in the enterprise is not about finding a "perfect" model. It is about building a structured, engineering-driven system for verification. A systematic AI risk management framework provides a disciplined approach to managing these challenges across the entire lifecycle. The National Institute of Standards and Technology makes the same point in its AI RMF 1.0, which treats continuous risk management as essential to trustworthy AI. The framework described here consists of three defensive layers that work together so that outputs are not just plausible, but correct.

Layer 1: Model-Level Safeguards with RAG

The first line of defense is Retrieval-Augmented Generation (RAG). This technique grounds the AI's responses in a curated, private knowledge base, such as your company's internal compliance manuals, the latest regulatory statutes, or proprietary market data. Instead of letting the model answer from its generalized training alone, a RAG pipeline first retrieves relevant passages from your trusted repository and supplies them to the model as context. This reduces hallucinations and lets the model cite sources from a controlled environment, turning it from a creative storyteller into a fact-based synthesizer.
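To make the retrieve-then-generate flow concrete, here is a minimal sketch. It assumes a toy in-memory corpus and ranks passages by keyword overlap, where a production system would more likely use embedding search over a vector index. The names `Passage`, `retrieve`, and `build_prompt` are illustrative and not part of any particular library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    doc_id: str  # hypothetical identifier within the trusted repository
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase, split on whitespace, and strip basic punctuation."""
    return {word.strip(".,;:()?!").lower() for word in text.split()} - {""}


def retrieve(query: str, corpus: list[Passage], top_k: int = 2) -> list[Passage]:
    """Rank passages by the number of query terms they share (toy scoring)."""
    query_terms = tokenize(query)
    scored = [(len(query_terms & tokenize(p.text)), p) for p in corpus]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    # Drop passages with no overlap so irrelevant text never reaches the model.
    return [p for score, p in ranked[:top_k] if score > 0]


def build_prompt(query: str, passages: list[Passage]) -> str:
    """Assemble a prompt that restricts the model to the retrieved sources."""
    sources = "\n".join(f"[{p.doc_id}] {p.text}" for p in passages)
    return (
        "Answer using only the sources below and cite their IDs. "
        "If the sources do not cover the question, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )
```

The prompt returned by `build_prompt` would then be sent to whichever model the organization uses. Keeping the `doc_id` values in the prompt is what later allows the retrieved sources to be logged and shown to a reviewer.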

Layer 2: System-Level Controls and Business Rules

The second layer wraps the AI in an application that enforces deterministic business logic. This layer acts as a non-negotiable gatekeeper. For example, an AI-generated investment summary can be automatically checked by a rules engine to ensure it contains mandatory FINRA disclosures before a human ever sees it. If a required disclosure is missing, the output is rejected or flagged. These controls are not probabilistic; they are absolute, ensuring that every output adheres to predefined business and compliance standards.
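A deterministic check of this kind can be very small. The sketch below assumes that mandatory disclosures can be expressed as required phrases. The phrases and rule IDs shown are placeholders for illustration, not actual regulatory wording, and a real rules engine would take its rule set from the compliance team.

```python
from dataclasses import dataclass, field

# Hypothetical rule set: rule ID -> phrase that must appear in the draft.
REQUIRED_DISCLOSURES = {
    "past_performance": "past performance is not indicative of future results",
    "risk_of_loss": "investing involves risk, including possible loss of principal",
}


@dataclass
class ValidationResult:
    passed: bool
    missing: list[str] = field(default_factory=list)


def check_disclosures(
    draft: str, required: dict[str, str] = REQUIRED_DISCLOSURES
) -> ValidationResult:
    """Return which required disclosures are absent from the draft."""
    # Normalize case and whitespace so formatting differences do not matter.
    normalized = " ".join(draft.lower().split())
    missing = [rule_id for rule_id, phrase in required.items() if phrase not in normalized]
    return ValidationResult(passed=not missing, missing=missing)
```

The same input always yields the same result, which is the property that separates this layer from the model. A draft with a non-empty `missing` list would be rejected or flagged before reaching a reviewer.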

| Layer | Purpose | Key Technique | Primary Risk Mitigated |
|---|---|---|---|
| Layer 1: Model-Level Safeguards | Ground AI responses in factual, verified data | Retrieval-Augmented Generation (RAG) | Factual inaccuracies and hallucinations |
| Layer 2: System-Level Controls | Enforce deterministic business and compliance rules | Rules engines, validation APIs | Regulatory non-compliance and business logic violations |
| Layer 3: Human Oversight | Provide expert judgment for ambiguous or high-stakes cases | Human-in-the-Loop (HITL) interfaces | Nuanced errors, ethical blind spots, and novel risks |

This table outlines the distinct functions of each layer in the reliability framework. Each layer addresses a different type of risk, working together to create a comprehensive and defensible system.

This multi-layered approach represents a critical paradigm shift. The focus moves from blindly trusting a probabilistic model to verifying the output of a complete system through deterministic checks and human review. This is the foundation of a successful AI strategy.

Integrating Human-in-the-Loop for Critical Oversight

In regulated fields, full automation is often indefensible. The nuances of law, finance, and healthcare demand expert judgment that models alone cannot provide. This is where Human-in-the-Loop (HITL) becomes a critical governance layer. International bodies agree: an OECD report on advancing accountability in AI highlights human oversight as essential for accountability, especially in high-stakes scenarios. Effective HITL compliance is not about slowing things down but about adding an essential layer of validation.

Enterprises can implement several distinct HITL models depending on the risk profile of the task:

- Review and Approve: The AI acts as a highly efficient drafter. For instance, it can prepare an initial suspicious activity report for a bank by summarizing transaction data. A human compliance officer then provides the final review, makes any necessary adjustments, and submits the report. This model prioritizes accuracy and accountability above all.

- Exception Handling: The AI autonomously processes standard, low-risk tasks, such as categorizing routine insurance claims. However, it is programmed to automatically flag complex, ambiguous, or high-value cases for a human expert. This approach optimizes efficiency without sacrificing control over critical decisions.

- AI Co-pilot: The AI assists a human decision-maker by surfacing relevant data, highlighting potential compliance conflicts from internal documents, and suggesting actions. The human retains full authority, using the AI as an intelligent assistant to enhance their judgment, not replace it.
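As an illustration of the exception-handling model, the routing decision can be written as an explicit rule that also records why a case was escalated. The thresholds, field names, and the assumption that the model reports a confidence score between 0 and 1 are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_PROCESS = "auto_process"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class Claim:
    claim_id: str
    amount: float
    model_confidence: float  # assumed to be a score in [0, 1]
    validation_passed: bool  # outcome of the system-level checks


# Hypothetical thresholds; real values would be set by the risk owner.
MAX_AUTO_AMOUNT = 5_000.0
MIN_AUTO_CONFIDENCE = 0.90


def route_claim(claim: Claim) -> tuple[Route, list[str]]:
    """Send a claim to a human whenever any escalation condition holds."""
    reasons: list[str] = []
    if not claim.validation_passed:
        reasons.append("failed automated validation")
    if claim.amount > MAX_AUTO_AMOUNT:
        reasons.append("high-value claim")
    if claim.model_confidence < MIN_AUTO_CONFIDENCE:
        reasons.append("low model confidence")
    route = Route.HUMAN_REVIEW if reasons else Route.AUTO_PROCESS
    return route, reasons
```

Returning the reasons alongside the route gives the reviewer immediate context and gives the audit log a record of why the case left the automated path.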

For any of these models to work, the user interface is paramount. The UI must transparently present the AI's reasoning, its confidence score, and the specific source data retrieved via RAG that informed its conclusion. This empowers the human operator to make fast, informed, and defensible judgments. Without a well-designed human-machine interface, effective AI governance is simply not possible.

Designing for Unquestionable Auditability and Transparency

The frameworks and oversight layers we have discussed all serve a final, non-negotiable requirement: regulatory accountability. For auditors and regulators, the process behind an AI-assisted decision is just as critical as the outcome itself. This is why AI auditability and transparency must be designed into the system from day one. An "audit-ready" system is one that can prove not just what it did, but why it did it.

A complete AI audit trail must log several key data points for every single transaction. Anything less is an incomplete record.

- The original user prompt or the automated system trigger that initiated the process.

- The specific documents and data chunks retrieved by the RAG system to inform the response.

- The model's raw, unaltered output before any system-level checks are applied.

- The results of all automated validation checks from the system-level controls, noting passes and failures.

- A record of the final action, including any modifications made by a human operator in a HITL workflow, complete with their identity and an immutable timestamp.

In this context, "explainability" is not about deciphering the complex mathematics of a neural network. It is about creating a traceable, step-by-step lineage for every decision, showing a clear, logical path from input to output through verifiable stages. When the system writes an immutable log entry at each stage, that lineage is always available. For an AI assessing an insurance claim, the audit trail must show exactly which policy clauses were referenced, what customer data was analyzed, and whether the final payout decision was fully automated or required approval from a human agent. This creates an unquestionable record for any compliance review.
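One common way to approximate an immutable log in application code is a hash chain, in which each record stores the hash of the record before it. The sketch below captures the data points listed above using only the Python standard library. The field names are illustrative, and a hash chain makes tampering detectable rather than impossible, so a real deployment would likely pair it with write-once storage.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: dict) -> str:
    """Hash a canonical JSON encoding so key order cannot change the result."""
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def append_record(
    log: list[dict],
    *,
    trigger: str,
    retrieved_chunk_ids: list[str],
    raw_model_output: str,
    validation_results: dict[str, bool],
    final_action: str,
    reviewer_id: Optional[str] = None,
) -> dict:
    """Append one audit record that is chained to the previous record."""
    body = {
        "trigger": trigger,
        "retrieved_chunk_ids": retrieved_chunk_ids,
        "raw_model_output": raw_model_output,
        "validation_results": validation_results,
        "final_action": final_action,
        "reviewer_id": reviewer_id,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prev_hash": log[-1]["record_hash"] if log else GENESIS_HASH,
    }
    record = {**body, "record_hash": _digest(body)}
    log.append(record)
    return record


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered record breaks the chain."""
    prev_hash = GENESIS_HASH
    for record in log:
        body = {k: v for k, v in record.items() if k != "record_hash"}
        if record["prev_hash"] != prev_hash or record["record_hash"] != _digest(body):
            return False
        prev_hash = record["record_hash"]
    return True
```

An auditor can run `verify_chain` over an exported log to confirm that no record was altered after it was written.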

Maintaining Compliance in Dynamic Regulatory Environments

Building a compliant AI system is not a one-time project. A system that is perfectly compliant today can become non-compliant tomorrow due to model drift, new legislation, or a shift in business rules. For example, a new state-level data privacy law could instantly invalidate existing data handling processes. Future-proofing your AI investment requires building for adaptation from the start.

The most effective strategy is a modular architecture. In this design, the knowledge base used by RAG and the compliance logic in the rules engine can be updated independently of the core LLM. This agility is a cornerstone of modern enterprise AI governance. It allows an organization to respond to regulatory changes in hours, not months, by updating a rule set or adding new documents to the knowledge base without re-engineering the entire application.
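One way to achieve this separation is to keep compliance rules as versioned data rather than application code. In the sketch below, a rule set is loaded from JSON, so responding to a new requirement means publishing a new rule file. The JSON shape and the example phrases are assumptions made for illustration.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class RuleSet:
    version: str
    required_phrases: tuple[str, ...]


def load_ruleset(raw_json: str) -> RuleSet:
    """Parse a rule set from JSON text (for example, a file kept under version control)."""
    data = json.loads(raw_json)
    return RuleSet(
        version=data["version"],
        required_phrases=tuple(p.lower() for p in data["required_phrases"]),
    )


def missing_phrases(text: str, rules: RuleSet) -> list[str]:
    """List the required phrases that do not appear in the text."""
    lowered = text.lower()
    return [p for p in rules.required_phrases if p not in lowered]


# Two hypothetical versions of the same rule set. Only the data differs.
RULES_V1 = '{"version": "v1", "required_phrases": ["not a deposit"]}'
RULES_V2 = '{"version": "v2", "required_phrases": ["not a deposit", "may lose value"]}'
```

Moving from `RULES_V1` to `RULES_V2` changes what the checker enforces without touching the checking code, the knowledge base, or the model. Recording the rule set version alongside each validation result also tells an auditor which rules were in force for a given decision.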

This architecture must be paired with continuous monitoring and automated testing. We recommend maintaining a "golden dataset," which is a curated set of test cases representing your most critical compliance scenarios. This dataset should be run against the AI system on a regular basis to detect any performance degradation, behavioral drift, or new, unforeseen failure modes. It acts as an early warning system, alerting you to issues before they impact operations.
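A golden dataset run can be sketched as a small regression harness. Here the AI system is represented as any callable that maps a prompt to a text response, and each case lists phrases that must or must not appear in the output. These simple string checks are an assumption for illustration, and real suites often add structured assertions or expert-graded answers.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class GoldenCase:
    case_id: str
    prompt: str
    must_contain: tuple[str, ...] = ()
    must_not_contain: tuple[str, ...] = ()


def run_golden_dataset(
    system: Callable[[str], str], cases: list[GoldenCase]
) -> dict[str, list[str]]:
    """Run every case and return a map of failing case IDs to their problems."""
    failures: dict[str, list[str]] = {}
    for case in cases:
        output = system(case.prompt).lower()
        problems = [f"missing: {p}" for p in case.must_contain if p.lower() not in output]
        problems += [f"forbidden: {p}" for p in case.must_not_contain if p.lower() in output]
        if problems:
            failures[case.case_id] = problems
    return failures
```

Running this on a schedule, and again after every model, rule set, or knowledge base change, turns the golden dataset into the early warning system described above. An empty result means every critical scenario still behaves as expected.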

Ultimately, ensuring AI reliability in regulated industries is an ongoing operational discipline. It requires a durable synthesis of advanced technology, rigorous processes, and robust governance. For organizations seeking to understand their current posture and build for the future, an initial assessment can provide a clear roadmap for establishing and maintaining trust in their AI systems.
