The Strategic Case for On-Premise AI

Why regulated enterprises need sovereign on-premise AI: data jurisdiction, secure architecture, phased deployment, governance-by-design, and orchestration for scale.

Published on Jan 9, 2026

In the United States, data is generally governed by the laws of the jurisdiction in which it is stored and processed. For enterprises in regulated sectors like finance and healthcare, this is not a trivial detail. Demonstrating compliance with SEC regulations or HIPAA depends on knowing exactly where your data resides and who can reach it. For these organizations, on-premise or private cloud AI deployment becomes the default starting point, turning a technical choice into a core business strategy.

This is the essence of data sovereignty in AI. It’s the assertion of control over your most valuable asset: your data. This approach aligns with frameworks outlined by bodies like the National Institute of Standards and Technology (NIST), which emphasize control and governance over data assets. When you send proprietary information to a third-party API, you are effectively ceding a degree of that control. An on-premise model, by contrast, allows for a depth of customization and fine-tuning on your unique datasets that a generic, one-size-fits-all API is hard-pressed to match.

Beyond compliance, a sovereign AI strategy mitigates significant supply chain risks. Relying on external API providers introduces vulnerabilities tied to their stability, security posture, and even geopolitical shifts. What happens if your provider changes its terms, suffers a breach, or is affected by international policy? An AI system operating within your own infrastructure is insulated from these external shocks.

Ultimately, a sovereign AI system becomes a form of intellectual property. It is a durable competitive advantage engineered from your own data and expertise, not a rented tool available to all your competitors. This strategic imperative is why many leaders in regulated U.S. sectors are seeking specialized guidance on their AI journey.

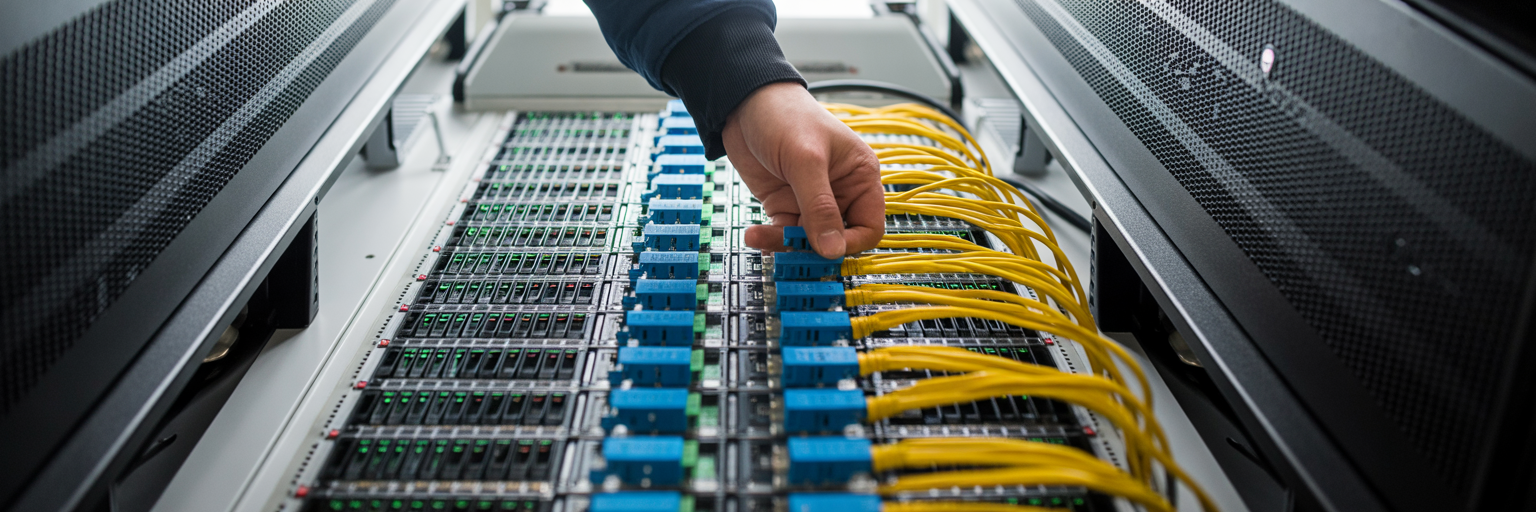

Architecting Your Secure AI Environment

With the strategic "why" established, the focus shifts to the architectural "how." Before a single line of code is written, you must design a secure and future-proof environment. This blueprinting phase is critical for creating a digital fortress to house your AI. It also reflects the growing recognition of sovereign AI, an approach now championed by major technology leaders, in which data and models are managed within organizational borders to ensure compliance and security.

Building this foundation involves several deliberate steps:

- Infrastructure Readiness Assessment: The first move is to audit your existing on-premise or Virtual Private Cloud (VPC) resources. This isn't just a server count. You need to analyze GPU availability, storage input/output operations per second (IOPS), and network latency. Think of it as surveying the land before building a house. It reveals the hardware and performance gaps that must be filled before construction begins. A thorough infrastructure readiness assessment is the critical first step to de-risk your project and ensure a smooth deployment.

- Airtight Network Configuration: Your AI environment cannot be an open-door office. It must be a sealed vault. This means configuring strict firewall rules to control all inbound and outbound traffic. Network segmentation isolates the AI systems from the rest of your corporate network, containing any potential issues. Intrusion detection systems then ensure that your AI deployment within the firewall is actively monitored for unauthorized activity.

- Model-Agnostic Framework Selection: It is tempting to build your entire strategy around a single, popular large language model. This is a strategic error. A model-agnostic architecture gives you the flexibility to use the best tool for each specific job, whether it's Llama 3 for content generation or a specialized Granite model for code analysis. More importantly, it allows you to swap models as better, more efficient ones inevitably emerge, preventing vendor lock-in.

- Foundational Security Protocols: Security cannot be an afterthought. From day one, your architecture must include encryption for data at rest and in transit. Strict Identity and Access Management (IAM) policies with role-based access control (RBAC) ensure that only authorized personnel can access sensitive models and data. Finally, comprehensive, continuous logging to tamper-resistant storage creates the record needed for future audits and troubleshooting.
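
To make the model-agnostic idea above concrete, here is a minimal sketch in Python. It assumes each model can be wrapped as a plain function from prompt to text; the `ModelRouter` class, the task names, and the stand-in backends are hypothetical and are not part of any specific framework.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

# Assumption: a backend is any callable that maps a prompt to generated text.
ModelBackend = Callable[[str], str]


@dataclass
class ModelRouter:
    """Maps task names to interchangeable model backends."""
    backends: Dict[str, ModelBackend] = field(default_factory=dict)

    def register(self, task: str, backend: ModelBackend) -> None:
        # Re-registering a task swaps the model without touching callers.
        self.backends[task] = backend

    def run(self, task: str, prompt: str) -> str:
        if task not in self.backends:
            raise KeyError(f"no model registered for task {task!r}")
        return self.backends[task](prompt)


# Stand-in backends; real ones would call locally hosted models.
def fake_generation_model(prompt: str) -> str:
    return f"[generation] {prompt}"


def fake_code_model(prompt: str) -> str:
    return f"[code-analysis] {prompt}"


router = ModelRouter()
router.register("content_generation", fake_generation_model)
router.register("code_analysis", fake_code_model)
```

Because callers only know the task name, replacing the model behind `content_generation` is a single `register` call rather than a rewrite of every integration.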

A Phased Implementation for On-Premise AI

With a secure architecture designed, the project moves into active implementation. This practical on-premise AI deployment guide breaks the process into four distinct and manageable phases, transforming the blueprint into a functional, intelligent system.

Phase 1: Strategic Discovery and Data Engineering

This is where the rubber meets the road. The process begins by identifying high-impact business use cases where AI can deliver measurable value, not just novelty. Once a target is selected, the focus shifts to your proprietary data. This is the fuel for your custom AI. Data engineering involves cleansing, normalizing, and, where necessary, anonymizing datasets to create a high-quality, secure foundation for model training.
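
As a rough illustration of the cleansing and anonymizing step, the sketch below normalizes whitespace and masks two kinds of identifiers. The patterns and the function names (`normalize`, `anonymize`, `prepare_record`) are assumptions for this example only; real pipelines for regulated data would rely on vetted PII-detection tooling and review rather than two regular expressions.

```python
import re

# Illustrative patterns only (assumption): they catch simple email addresses
# and SSN-shaped numbers, not every form of personal data.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def normalize(text: str) -> str:
    """Collapse runs of whitespace and trim both ends."""
    return " ".join(text.split())


def anonymize(text: str) -> str:
    """Replace email addresses and SSN-shaped numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return SSN_RE.sub("[SSN]", text)


def prepare_record(text: str) -> str:
    """Normalize first, then anonymize, so patterns see clean input."""
    return anonymize(normalize(text))
```

Running every record through one function like `prepare_record` before it reaches the training set keeps the redaction rules in a single, auditable place.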

Phase 2: Custom Model Engineering and Fine-Tuning

Here, a generic base model is transformed into a specialized corporate asset. A foundational model is selected based on the use case, and then it is meticulously fine-tuned on the prepared enterprise data from Phase 1. This is the step that teaches the AI your company's unique language, processes, and context. It’s the difference between an assistant who knows general facts and one who knows your business inside and out.

Phase 3: Secure Deployment and Integration

The fine-tuned model is now ready to be deployed within the secure environment architected earlier. This phase uses a container runtime such as Docker to package the AI model and its dependencies, and an orchestrator such as Kubernetes to schedule and scale those containers. This ensures consistency and portability. Secure API endpoints are then created, allowing your existing business applications to communicate with the AI system in a controlled and monitored way, bringing its intelligence into daily workflows.
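
One small piece of a controlled endpoint can be sketched as follows. This is a simplified illustration, not a full server: the `X-API-Key` header name, the status codes chosen, and the `handle_inference` function are assumptions, and a production deployment would typically sit behind a gateway with TLS and per-client credentials.

```python
import hmac
from typing import Callable, Dict, Tuple


def is_authorized(headers: Dict[str, str], expected_key: str) -> bool:
    """Compare the presented API key in constant time to limit timing leaks."""
    presented = headers.get("X-API-Key", "")  # header name is an assumption
    return hmac.compare_digest(presented.encode(), expected_key.encode())


def handle_inference(
    headers: Dict[str, str],
    prompt: str,
    expected_key: str,
    model: Callable[[str], str],
) -> Tuple[int, str]:
    """Return an (HTTP-style status, body) pair for one inference request."""
    if not is_authorized(headers, expected_key):
        return 401, "unauthorized"
    if not prompt.strip():
        return 400, "empty prompt"
    return 200, model(prompt)
```

The model is passed in as a callable so the same gatekeeping logic applies regardless of which model is serving the request.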

Phase 4: Building Agentic Workflows for Automation

This advanced phase moves beyond single-task models to automated, multi-step business processes, and it is where a governed AI agent deployment strategy comes to life. Instead of one model performing one task, you build multi-agent systems in which different AIs collaborate. An internal orchestration framework becomes essential for constructing these self-healing workflows. A prime use case is SAP modernization, where one agent refactors legacy code while another validates the output for accuracy before any changes are committed.
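
The refactor-then-validate pattern can be sketched in a few lines of Python. Everything here is hypothetical: the agents are stub functions standing in for model calls, and "self-healing" is reduced to its simplest form, retrying until the validator accepts the output or the attempt budget runs out.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class WorkflowResult:
    committed: bool
    output: Optional[str]
    attempts: int


def run_refactor_workflow(
    source: str,
    refactor_agent: Callable[[str, int], str],
    validator_agent: Callable[[str, str], bool],
    max_attempts: int = 3,
) -> WorkflowResult:
    """Commit a candidate only after the validator agent accepts it."""
    for attempt in range(1, max_attempts + 1):
        candidate = refactor_agent(source, attempt)
        if validator_agent(source, candidate):
            return WorkflowResult(True, candidate, attempt)
    # Nothing is committed if no candidate passes validation.
    return WorkflowResult(False, None, max_attempts)


# Stub agents for illustration; real agents would wrap model calls.
def stub_refactor_agent(source: str, attempt: int) -> str:
    # Pretend the first attempt is flawed and later attempts succeed.
    return source if attempt == 1 else source.replace("OLD_CALL", "NEW_CALL")


def stub_validator_agent(original: str, candidate: str) -> bool:
    # Accept only output that no longer contains the legacy call.
    return "OLD_CALL" not in candidate
```

The key design choice is that the commit decision lives in the workflow, not in the refactoring agent, so no single agent can both produce and approve a change.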

Navigating On-Premise Deployment Challenges

An on-premise AI initiative is a significant undertaking, and it's important to approach it with a clear understanding of the potential hurdles. Acknowledging these challenges is the first step toward mitigating them effectively. The goal is to build and maintain secure enterprise AI systems by anticipating friction points before they disrupt your project.

The primary challenges include the high upfront capital expenditure for GPUs and the difficulty of capacity planning. Unlike the cloud’s pay-as-you-go model, on-premise requires careful forecasting. Ensuring high availability and low-latency performance also adds an operational burden, as your team becomes responsible for redundancy and failover. Furthermore, finding and retaining engineers with the niche skills for MLOps, on-premise infrastructure, and AI security is a well-known difficulty. Bridging this talent gap often requires a strategic partner with deep experience in AI strategy and implementation.

Finally, integrating modern AI agents with entrenched legacy platforms, such as mainframes or older SAP instances, can create significant friction. Specialized, context-aware tools like an ABAP Copilot are designed to bridge this gap, enabling communication between the new and the old.

| Challenge | Business Impact | Strategic Mitigation |
|---|---|---|
| High Hardware Costs & Scalability | Large upfront CapEx; risk of over/under-provisioning. | Phased investment aligned with use case value; leverage hybrid VPC models for burst capacity. |
| Ensuring High Availability | System downtime leads to business process disruption and lost revenue. | Implement redundant infrastructure, automated failover mechanisms, and proactive performance monitoring. |
| Specialized Talent Gap | Difficulty in hiring and retaining staff; project delays and operational risks. | Partner with specialized enterprise AI consulting firms; focus on upskilling internal IT teams on core concepts. |
| Legacy System Integration | Modern AI cannot communicate with critical older systems, limiting ROI. | Utilize context-aware connectors and specialized agents (e.g., ABAP Copilots) designed for legacy environments. |

This table outlines the primary operational and financial hurdles of on-premise AI deployment and provides actionable strategies for enterprise leaders to de-risk their initiatives.

Governance and Orchestration for Sovereign AI

Deploying a sovereign AI system is not the end of the journey. It is the beginning of a new operational reality that requires continuous governance and orchestration. Maintaining long-term control and compliance is what separates a successful AI program from a risky science project. This is especially true when developing custom AI for regulated industries.

Effective governance rests on several key pillars:

- Human-in-the-Loop (HITL) Gates: True governance means accountability is built in by design. For high-risk or irreversible actions, the system must enforce mandatory human approval checkpoints. This ensures that a human expert always has the final say, preventing automated errors from escalating.

- End-to-End Auditability: Every decision, query, and action taken by the AI system must be logged comprehensively. This creates an immutable audit trail essential for demonstrating compliance with regulations like the EU AI Act. This approach directly supports the principles laid out in the NIST AI Risk Management Framework, which provides a structured process for managing risks. Achieving this level of transparency requires a robust AI governance framework from the outset.

- Enterprise Orchestration Platform: As your AI footprint grows, managing individual agents becomes untenable. A central orchestration platform acts as the command center. It provides a single interface for administrators to deploy, monitor, update, and govern all AI agents across the enterprise, ensuring consistent policy application.

- Automated Policy Enforcement: The orchestration platform should do more than just monitor. It must actively enforce governance. This includes applying security policies in real-time, managing access controls, and using self-healing routines to automatically correct deviations and maintain system health. This proactive stance is what makes sovereign AI manageable at scale.
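
Two of the pillars above, human approval gates and a tamper-evident audit trail, can be illustrated together. This is a sketch under stated assumptions: the `AuditLog` class, the `HIGH_RISK_ACTIONS` set, and the action names are hypothetical, and hash chaining makes tampering detectable rather than impossible, so a real deployment would also need write-protected storage.

```python
import hashlib
import json
from typing import Callable, Dict, List

GENESIS_HASH = "0" * 64
FIELDS = ("actor", "action", "outcome", "prev")


def _digest(body: Dict[str, str]) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only log where each entry commits to the previous entry's hash."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, str]] = []

    def append(self, actor: str, action: str, outcome: str) -> None:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {"actor": actor, "action": action, "outcome": outcome, "prev": prev}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = GENESIS_HASH
        for entry in self.entries:
            body = {key: entry[key] for key in FIELDS}
            if entry["prev"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True


# Hypothetical list of actions that require human sign-off.
HIGH_RISK_ACTIONS = {"delete_records", "commit_code_change"}


def execute_with_gate(
    action: str, agent: str, approver: Callable[[str], bool], log: AuditLog
) -> bool:
    """Run low-risk actions directly; high-risk ones need human approval."""
    if action in HIGH_RISK_ACTIONS and not approver(action):
        log.append(agent, action, "blocked: approval denied")
        return False
    log.append(agent, action, "executed")
    return True
```

Note that both outcomes are logged: a denied action leaves the same kind of record as an executed one, which is what lets an auditor reconstruct what the system attempted as well as what it did.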
